ABSTRACT

This paper offers an ontological perspective on the notion of Form and explores the organization of nature in terms of processes of energy transfer and encoding that lead to the creation of information records. It is proposed that form accounts for the basic unity of information processing, inasmuch as it possesses a triadic nature that includes both the digital and analog components of information plus the intrinsic activity of their conversion process. Form expresses the indissoluble semiosis of processes and structures, and provides an interpretation of the mapping of the space of digitally encoded information into the space of analog information. This relationship in natural systems is made possible by ‘work-actions’ executed by semiotic agents. A discussion inspired by these relations of informational spaces is employed to illustrate how Peirce's categories, which converge in the notion of form, provide conceptual tools for understanding the hierarchical organization of nature. These informational spaces find their most outstanding example in the study of sequence and structure correlations in RNA and proteins. The mapping between sequence and shape spaces also shows the current need to expand the central dogma of molecular biology.

1 THE NOTION OF FORM

Life and form are two intimately associated terms, such that one can hardly imagine one without the other. In Plato's terms forms are a priori, immutable and eternal ideas that explain the intelligible world because they remain unchanged, in contrast to the sensible world of variation and constant change. These intelligible and unchanging entities are ideas, eidos, that represent objective, real and universal states which have no concern whatsoever for individual, concrete and sensible entities. Forms confer an intelligibility that is independent of the concrete and sensible world experienced by every single individual. If forms had such epistemological priority and real existence separate from matter, it would leave unsolved the problem of how to achieve logical and ontological consistency without discarding the concrete and sensible world experienced by individuals.

Aristotle uses the expression of form as logos, account, formula or intelligibility, which is why we speak of formal reasoning or mathematical formalism. For Aristotle, matter and form are two metaphysical principles inseparable from each other. Matter cannot be reduced to formless atoms acted upon by external forces, but instead matter-form is a principle of activity and intelligibility better expressed in the notion of substance (Aristotle. Met.VII.15). Aristotle thought that intelligibility must be sought in the internal dynamics associated with morphogenetic processes that produce invariant forms, as he described in his observations on embryological process. For him nature is meant in the sense of a developing process that actualizes form whose properties are intrinsic to matter. That is, the priority of form and the formal cause over the other Aristotelian causes has to do with the fact that form operates from within, and so becomes the principle or cause of movement (Aristotle. Phys.III,1). Aristotle says that the three causes formal, efficient and final (i.e. form, source of change, and end) often coincide. The usual interpretation of this is that efficient cause is a form operating a tergo, and final cause a form operating a fronte. In many cases form, source of change, and end, coincide because when a form is a source of change, it is a source of change as an end. Thus Aristotle is a pioneer inasmuch as he seems to have anticipated the existence of a semiotic closed loop. In some cases, he seems not to discriminate between material cause and the source of change. In other cases, the formal cause and the end are considered as one and the same. However, his argument in terms of the four causes begs important issues in terms of living things. An explanation of the living requires simultaneous use of unconditional necessity (matter, source of change) and conditional necessity (form, end). 
In other words, Aristotle's presentation of the four causes implicitly states the complementarity of final and efficient causes for material processes, but marks form or formal cause as mediator between them.

While Aristotle accepted that a particular organ can take over the functions of an impaired one in order to preserve the performance of the organism, his debt to Plato’s notion of immutable ideas prevented him from envisioning the production of new forms. In Aristotle, embryological processes are confined to the ongoing production of fixed forms. However, Aristotle’s arguments about the concept of Form, as pattern and activity originating in a material system, permit him to integrate the argument of chance, which cannot be proved, with the teleological hypothesis, which cannot be disproved, as Kant stated in his Critique of Judgement.

According to Kant, an internalist approach validates teleological judgement. Nature acts as if it were an intelligent being, for to accept final causality in some products of nature implies that nature is a cognitive being (Kant, KU § 4(65), 376 pp:24). If final causality cannot be disproved yet requires cognition, then the hypothesis of natural systems as cognitive cannot be disproved either. The relation between final causality and contingency is one of reciprocity. There is a contingent way to knowledge from part to whole, and a necessary way from whole to part (Kant, KU § 16 (77), 407 pp: 62). Hence, there is need for teleological judgement in order to explain contingency.

When we question how form emerges and is conserved along a defined lineage of descent, Buffon's answer, elaborated under the influence of Newtonian philosophy, still provides an interesting insight (Buffon, in Canguilhem, 1976), (Jacob, 1982). Forms (a three-dimensional arrangement of component parts) have something to do with shapes (external contour of organisms) that are produced by casting on moulds, in this case an inner mould constituted by 'the folding of a massive surface'. Even in the 1930's, biochemistry was not totally alien to such ideas, and it was not unusual to find references in the literature to molecules that shape each other as likely carriers of instructive information (Olby, 1994).

The real breakthrough of the molecular biology revolution in the 1950's was the concept that organisms could be reduced to digitally encoded one-dimensional descriptions. After this, DNA became the carrier of form, and became the informative molecule par excellence. Yet, it is not clear how forms and three-dimensional shapes get encoded in one-dimensional records. Nonetheless, the advantage of the digital view of information encoding is that linear sequence copying accounts for easy, rapid, efficient and faithful replication, a fact that was left unexplained in the notion of three-dimensional inner moulds. As I will discuss below, the success of the DNA-centred view from the 1950's onwards is a consequence of a broad tendency in biology to place major stress upon digitally encoded information.

According to D'Arcy Thompson (1917) the form of any portion of matter (living or dead), and the sensible changes of form, that is, its movements and its growth, may in all cases be shown as due to the action of mechanical forces. The form of an object is the result of a composite 'diagram of forces', and therefore, from the form we can deduce the forces that are acting or have acted upon it. Although ‘force’ is a term as subjective and symbolic as form, ‘forces’ are the causes by which forms and changes of form are brought about. D’Arcy Thompson's work constitutes the best attempt so far to reduce form to ‘forces’ in a world subject to friction while keeping the autonomy of form. Thompson's attempt to eliminate intrinsic formal causes by working only with extrinsic efficient ones results in an affirmation of the autonomy of form. The point he missed, however, is that ‘forces’ are not just mechanical interactions but are the directionality imposed on the flow of physical energy as a consequence of the interaction established between living entities and their surroundings. Therefore, it is not possible to reduce form to ‘forces’ because, as was argued later, the inner constitution of living systems enters into an explanation of the directionality of the forces. A purely mechanical approach to form, while useful, is insufficient to account for the directionality of forces.

This problem was dealt with in Whitehead's philosophy of organism. For him, substance (actual entities) undergoes changing relationships and therefore, only form is permanent and immortal. Nevertheless organisms or living entities are individual units of experience and can be regarded from either internal or external perspectives. The former (microscopic) is concerned with the formal constitution of a concrete and ‘actual occasion’, considered as a process of realizing an individual unit of experience. The latter (macroscopic) is concerned with what is ‘given’ in the actual world, which both limits and provides opportunity for the ‘actual occasion’. His remarkable insight is that actuality is a decision amid potentiality. The real internal constitution of an actual entity progressively constitutes a decision, conditioning a creativity that transcends that actuality. Conversely, where there is no decision involving exclusion, there is no ‘givenness,’ for example in Platonic forms. In respect of each actual entity there is ‘givenness’ of such forms. The determinate definiteness of each actuality involves an action of selection of these forms. Therefore, form involves actual determination or identity (Whitehead, 1969 99:151-297-372). Thus, the formal constitution of an actual entity could be construed as a semiotic process that mediates the transition from indetermination (potentiality) towards terminal determination (actuality). Consequently, theories of life had to incorporate thermodynamics, or the study of the flow of energy, in order to understand transformation into alternative forms.

With the advent of Prigogine's studies of dynamical systems far from equilibrium, the process aspect of form is highlighted and the question of accessibility to new forms arises. Prigogine's definition of dissipative structures provides an intellectual framework both for a theory of form and for evolutionary approaches, which hereafter become inevitably bound to the development of thermodynamics. In this light, Form as process becomes the only physical alternative to Leibniz's concept of "pre-established harmony" in "closed windowless monads", a concept that has always troubled many philosophers. Since communication implies openness, whatever harmony is achieved must have been paid for in terms of energy transactions. In the same manner, form constitutes the alternative to classical atomism, which, I will argue, presents itself anew in the guise of genetic reductionism.

Due to the growing influence of the mechanical conception of life during the nineteenth and twentieth centuries, the concept of form was gradually but subtly replaced with ‘structure’. A structure is made to depend on a particular arrangement of component units whose physical nature can be characterized with high precision. In this way one type of structure will be defined for every kind of material arrangement. This mechanical concept induces us to imagine that for every functional activity performed by living organisms, a particular suitable structure will be required. No room is left for degeneracy, nor for cases in which a particular function can be executed by different but equally suited structural devices. Thus Kimura's neutral theory, about the existence of a wide variety of equally functional variants at the molecular level, does not fit into such a schema. The phenomenon of pleiotropy, or the situation in which one structure can play more than one role according to the context or surrounding conditions, is also left unaccounted for.

Following the same mechanical reasoning, the phenomenon of functional takeover, in which one structure takes over functions realized through another structure that is similar enough to it, was discarded. In the mechanical conception of life functions are discrete and cannot be accessed by structures initially required for the execution of different tasks. If there is no way a structure can take over a functional action, it becomes even more difficult to imagine how a structuralist view can account for the origin of new functions. These three aspects, structural degeneracy, pleiotropy and functional takeover, are best explained with the notion of form. Form is also related to openness, since it has a contextual character and because it is not rigidly determined by its material composition. In this way forms can always surprise us by the unaccounted interactions that they can establish.

Today's information-centred paradigm in biology is biased inasmuch as it places a major emphasis on digitally encoded information (DNA), thereby reducing the broader and more unifying concept present in the notion of form. I will argue that we cannot understand digitally recorded information without making reference to the whole network of interactions that is characteristic of form. I will also argue that the agency associated with pattern organization is present in the notion of form, and that the shift of perspective from digitally encoded information back to form is in agreement with current semiotic perspectives being developed in the natural sciences.

It is urgent to develop the notion of form since it is the only available alternative to the doctrine of selfish replicator genes. The latter suggests that life is nothing but DNA copying, or the striving of digital informational texts to propagate themselves. By focusing on the one particular step of the process where gene copying is required, the selfish-gene view forgets all the other informational components and energetic transactions.

2 FROM EPISTEMOLOGY TO ONTOLOGY: THE TRIADIC ASPECT OF FORM

Peirce defined three universal and irreducible categories, Firstness, Secondness and Thirdness, that correspond at the same time to modes of being, forms of relation and elements of experience (phaneroscopy). He defined Phaneron as:

The collective total of all that is in any way or in any sense present to the mind, quite regardless of whether it corresponds to any real thing or not (Peirce, C.P.7.528).

As shown below, these three categories (quality, relation, representation), which imbricate or overlap one into another, prove useful in capturing the relation between the digital (sequence) and the analog (shape) informational spaces for the elements of experience that make representation possible. Below I will show how the concept of form, inasmuch as it is associated with Peircean Thirdness, produces a ground where the secular polar pairs (analog and digital information, continuity and discontinuity, phenotype and genotype, macro and micro dynamics) can be reconciled. The mediation between these polar pairs, which is a two-way process, is semiotic.

Considering that previous accounts of form and informational processes usually have fallen into the epistemological realm, I want to propose a more ontological perspective grounded on the following points:

1. Form has a triadic nature in terms of Peirce, and stands for a basic unit system in which the categories of potentiality, pattern and activity are intimately associated. Form, as stubborn fact, has the qualitative aspect of Firstness, the functional, determinate and relatedness traits proper to Secondness and, as a source of coordinating activity and intelligibility, is also an expression of Thirdness.

2. Form is a concept more general than structure, for the same form can be actualized in a plurality of structures. According to Bateson (1979), Form or structure is understood as a relational order among components that matters more than their material composition. By contrast I would characterize structure as the material realization of form, inasmuch as structure is defined as an arrangement of certain material components. Though forms are always associated with matter, they are nonetheless independent of any particular material embodiment. ‘Structure’ can be tackled by a mechanical approach that considers, for example, the molecule itself as separate from its functional context; but any notion of form also implies functionality of shape. While shape can be restricted to a local zone, form also deals with the global dynamics that generates pattern. Thus form is not exclusively circumscribed to any static pattern as such for, as argued below, it includes a set of structures whose global dynamics present a convergent shape.

3. Form is prior to and a necessary condition of natural interactions; thus form has to do with how systems experience their external world. Form is also a quality of the observer (whole) and consequently of the interacting and discriminating devices (parts) presented to it. Both part and whole through natural interactions are forms. Thus Form generates semiotic agency.

4. Forms are not fixed. Forms as analog encoding can be reversibly modified by reciprocal fit induced by the presence of stimuli, whereas forms as digital encoding can be irreversibly modified by mutations. The net result is that forms do evolve.

5. Forms are not abstract idealized Platonic patterns beyond the world of physical experience, because they depend on energy landscapes that define their thermal stability and thermodynamically accessible states. Forms are like matrices of temperature-dependent arrangement probabilities (e.g. energy landscapes of proteins and allosteric states). Nonetheless, energy landscapes can be modulated by the interaction with other natural systems or forms.

6. If the concept of form enters into close association with both the availability of energy flows and temperature stability thresholds, then form is nothing but encoded energy, following the terms of Taborsky (2001).

Form indicates that structures are dynamic, processual, transient or produced in a morphogenetic process, and responsible for semiotic agency inasmuch as they are the seat of local interactions. Thus, I define form as the process that gives rise to the establishment, transfer and conservation of a specific set of non-random interactions. Thence, form generates functions that materialize in a specific spatio-temporal arrangement of parts, of whatever nature, required to maintain a coherent performance. This perspective favours the current shift of outlook, as noted by Matsuno and Salthe (1995), that emerging systems' characteristics are the outcome of inner measuring processes. For interactions to be established two conditions are required: i) Openness, and ii) Affinity, or the non-random preference of one entity for another as selected from among others. The problem is that in order to knit together a whole network of interactions in the physical world, some basic forms inherent in elementary particles must have preexisted in order to make possible further recognition and increments in complexity. Therefore, priority should be given to form, for its nature explains the appearance of digitally encoded semiotic records.

All of the above characteristics of Form can be made more approachable in biology through the concept of the interacting phenotype. Phenotypes are much more than the set of characteristics that permit their classification in any taxonomic group; they are the actualization of form at the organismic level. Forms as phenotypes can exhibit some adjustments or accommodation as long as the interacting element is present, so that in a manner of speaking, subtle changes of form can be understood as analog encoding which can be further used, especially as a condition for digital encoding. This is precisely what Waddington defined as ‘genetic assimilation’, or the situation in which organisms select mutations that confer a variation that has already taken place in the presence of an external stimulus, so that by selecting them, a feature will develop even in the absence of the said stimulus. This phenomenon can only come about as a result of selection exerted from within, in order to retain or fix such variations. Surprising as it may be, this internal cognitive activity does away with the suppositions of Lamarckian inheritance, for as Baldwin noted:

... if we do not assume consciousness, then natural selection is inadequate; but if we do assume consciousness, then the inheritance of acquired characters is unnecessary (Baldwin, 1896)[1].

Organic selection in terms of Baldwin (1896:441-451) can be interpreted as the operation of the organism as a semiotic agent, for the organism both participates in the formation of the adaptations that it is able to accomplish and is itself selected, since those organisms that do not retain their adaptations are said to be eliminated by the external force of natural selection.

In this context a dichotomy between innate and acquired features proves to be inadequate because any phenotypic feature results from an interaction between genetic and environmental factors. This phenomenon of the heredity of adaptations can also be explained by Peirce's notion of ‘habit’. For him, a habit is the higher probability of repeating in the future something that has taken place in the past or, within the naturalist perspective, the higher probability of responding in the future in the same way as in the past in the presence of certain stimuli. Adjustment happens when the reaction to the stimuli is not habitual (Peirce, C.P. 1.409). Thence, when the stimulus is removed and no longer present, the habit tends to affirm itself, so that whenever uniformity increases, Thirdness (habit) is at work (Peirce, C.P. 1.415; 1.416). While habit and consciousness were traditionally considered as themes applied exclusively to describe the laws of mind, more recently they became part of the physical (molecular) explanation, thus paving the way for a transition from the laws of the mind to the laws of matter. Form and information (digital-analog) are not a priori properties, for they arise as a consequence of selection, but paradoxically, in order to initiate the process of selection, some forms must have existed to start with. Thus, form is to be understood as a principle of activity that organizes the world through its dynamic geometry and interactions. This concept is in open contrast to the Newtonian view, where absolute space-time is filled with passive geometricized entities. The concept of organic forms also paves the way toward hierarchical reasoning, since the interactions of a defined set of forms give birth to higher order forms.

Along these lines, formal cause or the causative factor associated with form can be related to local dynamical organization. Final cause can be explained as the global tendency to produce consistency and regularity (Salthe, 1999). But forms mediate between energy and matter through encoding and also in a reverse direction, since entropy production is mediated by information. In a cyclical process, there are two different moments in the dissipation of entropy: from form to digitally encoded information (phylogeny, DNA replication and translation) and the reverse from digitally encoded information to form (ontogeny, protein folding). The closing of the loop implies that form as conveyor of forces is also the permanent begetter of efficient causes.

3 RELATIONSHIPS BETWEEN DIGITAL AND ANALOG INFORMATIONAL SPACES

I start by arguing, as stated by Bateson (1979) and developed by Hoffmeyer and Emmeche (1991), that informational records have a dual nature, digital and analog. The following attempt to visualize the relationship between digital and analog informational spaces will contribute to the elaboration of an ontological approach to form. In the last decade, statistical approaches to molecular evolutionary biology have promoted the definition of sequence and shape space concepts, with the aim of formalizing the ways in which recorded information is manifested. Sequence space is a mathematical representation of all possible sequences of fixed length that can be imagined by permutation of their basic symbols. Sequence space is represented as a hypercube of n dimensions in which every point stands for one sequence and the dimension of the cube corresponds to the length of the binary chain (Hamming, 1950). This representation was originally applied to proteins (Maynard-Smith, 1970), and later to RNA and DNA sequences (Eigen, 1986).
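The hypercube picture of sequence space can be made concrete with a small sketch. The Python fragment below is only an illustration under stated assumptions: the function names (`sequence_space`, `hamming_distance`, `one_step_neighbours`) are hypothetical and do not come from the cited works, and exhaustive enumeration is feasible only for very short chains.

```python
from itertools import product


def sequence_space(alphabet, length):
    """Enumerate every sequence of the given length over the alphabet."""
    return ["".join(chars) for chars in product(alphabet, repeat=length)]


def hamming_distance(a, b):
    """Number of positions at which two equal-length sequences differ."""
    if len(a) != len(b):
        raise ValueError("sequences must have the same length")
    return sum(x != y for x, y in zip(a, b))


def one_step_neighbours(seq, alphabet):
    """All sequences reachable from seq by changing exactly one symbol."""
    return [
        seq[:i] + symbol + seq[i + 1:]
        for i in range(len(seq))
        for symbol in alphabet
        if symbol != seq[i]
    ]


# A binary chain of length 3 corresponds to the corners of an ordinary cube.
cube = sequence_space("01", 3)
print(len(cube))                          # 2 ** 3 = 8 corners
print(hamming_distance("000", "011"))     # 2 edges apart
print(one_step_neighbours("000", "01"))   # the 3 adjacent corners
```

For a four-letter alphabet such as that of RNA the same functions apply unchanged, but the number of points grows as 4 raised to the chain length, which is why the full space can only be sampled, never listed, for realistic lengths.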

The construction of a shape-space is an effort to formalize and represent all possible shapes or structural conformations that the set of all chains of symbols of fixed length can attain, provided interactions between the constitutive symbols take place, e.g. the secondary and three-dimensional structures of RNA and peptides. The dimensions of the hypercube depend on the number of shape parameters selected to define the shape. Shape-space parameters thus determine the size and mathematical dimensions of the shape-space; its size is relative to the observers’ ability to discriminate. The more parameters that are included in the description, the greater its size. With the introduction of functional considerations, discrimination can be made good enough to obtain molecular recognition, as in antigen-antibody and enzyme-substrate binding. So, the construction of shape-space is aimed at identifying a minimum set of parameters that are able to discriminate functional interactions (or operative size). What, then, are the criteria for parameter definition? As shown by Perelson, the dimensionality of shape-space for molecules like antibodies and proteins can be as low as 6 or 8 (x, y, z geometrical axes, molecular weight, charge, dipole moment, hydrophobicity, etc.) (Perelson, 1988), (Kauffman, 1993).

These representations of informational spaces enable us to visualize the concept of information as I = Hmax - Hobs, in which I always increases (Brooks and Wiley, 1988:35-52). Both sequence space and shape-space, when applied to the realm of DNA and proteins, present their own Hmax and Hobs values. For the space of digitally encoded information (sequence space), Hmax represents the realm of the possible, not necessarily attainable and therefore astronomically big, whereas Hobs is just a minute subregion of functionally selected sequences. Conversely, for the space of analog functional forms (shape-space), Hmax is very small in comparison with sequence space, and thence it is attainable. Hobs corresponds to actually existing shapes and is almost filled. For shapes, Hmax is near to completion because of the high convergence that appears in local shapes. Hobs for sequences is far greater in absolute value than Hobs for shapes, because of the high degeneracy and existence of neutral networks in sequence space (‘neutrality’ is conceived as the set of variants that still encode the same structure) (Schuster, 1997), (Blastolla, 1999), (Andrade, 2000).
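The relation I = Hmax - Hobs can be illustrated numerically. The sketch below makes two assumptions that are not stated in the text: entropies are taken as Shannon entropies measured in bits, and Hmax is taken as the logarithm of the number of possible states. The function names and the sample data are hypothetical and serve only as an illustration.

```python
import math
from collections import Counter


def h_max(n_states):
    """Maximum entropy in bits: all n_states equally probable (assumed definition)."""
    return math.log2(n_states)


def h_obs(observations):
    """Shannon entropy in bits of the observed frequency distribution."""
    counts = Counter(observations)
    total = sum(counts.values())
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def information(n_states, observations):
    """I = Hmax - Hobs, following the relation quoted in the text."""
    return h_max(n_states) - h_obs(observations)


# Chains of length 2 over a four-letter alphabet: 4 ** 2 = 16 possible states.
# The hypothetical sample occupies only two of them, equally often.
sample = ["AA", "AA", "AC", "AC"]
print(h_max(16))                # 4.0 bits
print(h_obs(sample))            # 1.0 bit
print(information(16, sample))  # 3.0 bits
```

The smaller the occupied subregion relative to the space of the possible, the larger I becomes, which is the situation the text describes for sequence space.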

Since entropic expansion takes place in informational natural systems that have code duality, the result must be represented both in digital (sequence) and in analog (shape) informational spaces. Notwithstanding, it must be remarked that the correspondences that may be inferred between them are not free in terms of energy, for such correspondence requires permanent ‘work-actions’ executed by semiotic agents in order to keep them coupled within specific surrounding conditions that provide their context.

3.1 Bijective: ‘one sequence - one structure’ mapping

A. There might be a moderate tendency towards bijectivity as the system approaches equilibrium, if all the information contained in the digital sequence were required for structure determination. Bijectivity would then be attainable at the point where K(seq:str), the mutual or shared information between sequence and shape, is maximum, so that Kseq = Kstr. It would be a case not only of total determination of structure by sequence, but also a situation in which all sequence information is used to specify the structure.

B. Also, bijectivity becomes evident for an external observer only when highly specific molecular structural parameters are included for the sake of an objective and high-resolution description, giving up the notion of functionality, as is done in most quantum mechanical approaches (Bermudez, et al., 1999). This approach is bound to fail since there is a limit imposed by Heisenberg's indeterminacy principle on the degree of precision that can be achieved.

C. In the real world these two spaces would show a moderate tendency towards bijectivity only for particular strings that are poorly represented.

3.2 Non-bijective: ‘many sequences - one structure’ and ‘one sequence - many structures’ mappings

Despite the classical tendency to emphasize mechanical one-to-one correlations, the real world shows a non-bijective mapping, as can be deduced from sequence and shape mapping for RNA and proteins. The following reasons support this viewpoint:

A. The specificity that concerns inner natural observers is the one that is enough to assure molecular functionality or recognition. Molecular recognition can be achieved with some degree of looseness, as put forward in the shape-space concept (Perelson, 1988, Kauffman, 1994).

B. Evolution takes place far from equilibrium, so that sequence and structure mutual information K(sequence:structure) increases without reaching a theoretical maximum value because: i) Not all information contained in the linear sequence may be needed for structure specification. ii) Some information not contained in the linear sequence may be required for structure specification; that is to say there are some interactions with external referents that cannot be incorporated into the linear sequence.

C. There is empirical evidence with RNA (Schuster, 1997), peptides and proteins (Kauffman, 1993). These cases show that there is a widespread network of sequences that may cover sequence space to a great extent and still correspond to the same shape for RNA and peptides. The non-bijective mapping of sequence space into shape-space elaborated by Schuster (1997) for RNA molecules of 100-nucleotide length shows that billions of linear sequences can satisfy one shape.

D. Furthermore, it is inferred that from any random RNA sequence the entire shape-space can be attained by confining the search to a few mutational steps that cover only a minimal defined subregion of the whole sequence space. Thus, any random subregion of the sequence space can encode all possible forms and shapes, which for RNA molecules of 100 nucleotides in length amount to 7 x 10^23.
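The idea of a many-to-one mapping with neutral neighbours can be sketched with a deliberately artificial example. The function `toy_shape` below is a hypothetical stand-in with no biophysical meaning: it simply collapses runs of identical symbols, so that many sequences share one "shape". It is not the RNA folding map studied by Schuster (1997); it only shows what degeneracy and neutral one-step mutants look like in a space small enough to enumerate.

```python
from collections import defaultdict
from itertools import product


def toy_shape(seq):
    """Hypothetical 'shape': collapse runs of identical symbols, e.g. '0011' -> '01'."""
    collapsed = [seq[0]]
    for symbol in seq[1:]:
        if symbol != collapsed[-1]:
            collapsed.append(symbol)
    return "".join(collapsed)


def shape_classes(alphabet, length):
    """Group every sequence of the given length by its toy shape."""
    classes = defaultdict(list)
    for chars in product(alphabet, repeat=length):
        seq = "".join(chars)
        classes[toy_shape(seq)].append(seq)
    return dict(classes)


def neutral_neighbours(seq, alphabet):
    """One-step mutants of seq that keep the same toy shape."""
    target = toy_shape(seq)
    neutral = []
    for i, old in enumerate(seq):
        for new in alphabet:
            if new != old:
                mutant = seq[:i] + new + seq[i + 1:]
                if toy_shape(mutant) == target:
                    neutral.append(mutant)
    return neutral


classes = shape_classes("01", 6)
n_sequences = sum(len(members) for members in classes.values())
print(n_sequences, "sequences map onto", len(classes), "toy shapes")
print(neutral_neighbours("0011", "01"))
```

Even in this toy setting the mapping is non-bijective: several sequences satisfy one shape, and some single mutations leave the shape unchanged, which is the minimal sense of a neutral path.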

While the space of possible forms is nearly complete in the real world of molecules, the space of existing sequences is astronomically small and restricted in relation to the space of all possible sequences. This latter assessment assumes that we are dealing with sequence spaces that throughout evolution keep their dimensionality constant, because the molecular variants are searched within the same restriction length. However, during the evolution of nature the search for molecular variants takes place not only by random expansion within a fixed length, but also by increasing the length of the molecules, so that the dimensionality of sequence space has also increased. Nonetheless, in all instances the number of functional shapes always lags far behind the number of possible linear sequences. At the level of primary sequences, the biosphere seems to have explored only an astronomically minute fraction of the total possible sequences, whereas the space of all possible shapes is almost completed. If evolution is concerned only with the expansion of the realm of actually existing sequences, it has hardly begun its task; conversely, if its aim is to produce all possible functional shapes, it has long been near to completion of that task. The point is that life is not just the random sorting of sequences but the finding of functional and active shapes, and there are prime possibilities for obtaining them at the molecular level. Moreover, the filling of the shape-space drives evolution to higher levels of organization in spite of the fact that the exploration of sequence space is confined to a minute region. For example, molecular diversity in high-level organisms endows them with a universal molecular tool-kit of an estimated magnitude between 10^8 and 10^11 for any enzymatic reaction or antigen recognition (Kauffman, 1993). Any potential substrate or antigen will be recognized (Perelson, 1988), (Segel, L.A. and Perelson, A. 1988).
If evolution of functional forms is close to saturation, the vast majority of molecular variants is neutral and corresponds to already existing shapes. Therefore, function can be assimilated to a degenerate set of numerous linear series of symbols.
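The degeneracy described here can be illustrated with a deliberately tiny sketch. Everything in it is an assumption made for illustration: `toy_shape` is a hypothetical stand-in for folding (it only records the purine/pyrimidine pattern of a sequence) and is not a model of RNA secondary structure, and the length of 6 is chosen only so that the whole sequence space can be enumerated.

```python
from collections import Counter
from itertools import product

ALPHABET = "ACGU"
LENGTH = 6  # kept tiny so the whole sequence space can be enumerated


def toy_shape(sequence: str) -> str:
    """Hypothetical stand-in for folding: purine (R) / pyrimidine (Y) pattern."""
    return "".join("R" if base in "AG" else "Y" for base in sequence)


# Enumerate the full digital space and group sequences by their toy shape.
sequences = ["".join(s) for s in product(ALPHABET, repeat=LENGTH)]
sequences_per_shape = Counter(toy_shape(s) for s in sequences)


def neutral_fraction(sequence: str) -> float:
    """Fraction of single-point mutants that keep the same toy shape."""
    shape = toy_shape(sequence)
    mutants = [
        sequence[:i] + base + sequence[i + 1:]
        for i in range(len(sequence))
        for base in ALPHABET
        if base != sequence[i]
    ]
    return sum(toy_shape(m) == shape for m in mutants) / len(mutants)


print(len(sequences))                     # size of the toy sequence space
print(len(sequences_per_shape))           # number of distinct toy shapes
print(set(sequences_per_shape.values()))  # sequences mapped to each shape
print(neutral_fraction("GGAUCC"))         # share of neutral one-step mutants
```

In this toy map many sequences share each shape and a fixed share of point mutations is neutral; real sequence-to-structure maps are far less regular, so the numbers carry no biological weight.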

The mapping of many sequences to one shape shows a characteristic degeneracy given by the existence of neutral paths. However, this mapping also exhibits a holographic correspondence in which each minuscule part of the world of semiotic genetic descriptions holds within it the makings of the entire world of forms (see Figure 1). The mapping between the living cell's words, or proteins, and their corresponding genetic descriptions, for a given length, is expected to show that the number of linear sequences that satisfy one form is much larger than it is for RNA. The following data (see Table 1) are only tentative estimates based on a length restriction of 100 nucleotides for RNA and 100 amino acids for proteins (Schuster, 1997; Kauffman, 1993; Perelson, 1988; Segel and Perelson, 1988).

Table 1. Estimated sizes of sequence and shape spaces for RNA and proteins

| | Size of sequence space | Size of shape space | Ratio sequence : shape |
|---|---|---|---|
| Protein | 20^100 ≈ 10^130 | 10^11 | 10^119 |
| RNA | 4^100 ≈ 10^60 | 7 x 10^23 | 1.4 x 10^36 |

These figures in Table 1 agree with the fact that the probability that a mutation is neutral for protein genes is as high as 70% (Tiana, 1998), and are in agreement with the existence of neutral networks that traverse sequence space (Blastolla, 1999).
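The orders of magnitude in Table 1 can be checked with a few lines of arithmetic. The sketch below takes the shape-space sizes exactly as quoted in the table; the variable names are illustrative only. It also shows that the tabulated RNA ratio of 1.4 x 10^36 follows from rounding 4^100 down to 10^60 before dividing.

```python
import math

LENGTH = 100  # chain length assumed in Table 1

# Exact sizes of the sequence spaces (Python integers are unbounded).
protein_sequences = 20 ** LENGTH
rna_sequences = 4 ** LENGTH

# Shape-space sizes as quoted in Table 1.
protein_shapes = 10 ** 11
rna_shapes = 7 * 10 ** 23

# Work with base-10 logarithms to compare orders of magnitude.
log_protein_sequences = math.log10(protein_sequences)
log_rna_sequences = math.log10(rna_sequences)
log_protein_ratio = log_protein_sequences - math.log10(protein_shapes)
log_rna_ratio = log_rna_sequences - math.log10(rna_shapes)

# Ratio obtained when 4^100 is first rounded to 10^60, as in the table.
rounded_rna_ratio = 1e60 / 7e23

print(f"protein sequence space: 10^{log_protein_sequences:.1f}")
print(f"RNA sequence space:     10^{log_rna_sequences:.1f}")
print(f"protein ratio:          10^{log_protein_ratio:.1f}")
print(f"RNA ratio (exact 4^100): 10^{log_rna_ratio:.1f}")
print(f"RNA ratio (rounded):     {rounded_rna_ratio:.2e}")
```

Either way of rounding leaves the conclusion untouched: sequences outnumber shapes by dozens of orders of magnitude for RNA and by more than a hundred for proteins.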

I find the previous conclusion a very telling argument for abandoning any digital or DNA-centered view that disregards the role of analog information. A more integral and comprehensive perspective is given by form, where the two components of information, digital and analog, stand on an equal footing, and their underlying unity is highlighted by the permanent agency of the natural system. The importance of the analog component of information was made manifest by Root-Berstein and Dillon (1997), who explained how the reciprocal fit between local shapes is responsible for non-random interactions between molecules. This type of complementary interaction drives evolution in a non-random way towards higher degrees of organization.

4 THE MAPPING DIGITAL (SEQUENCE) - ANALOG (SHAPE) SPACE AND PEIRCE’S CATEGORIES

The "holographic - degenerate"[2] encoding pattern (Andrade, 2000) becomes an example of how the three Peircian manners of being interlock with each other. The possible world can be imagined or discovered as one realizes the mathematical space in which all possible mutations can be located, and though it does not have physical existence as such, it helps to map the diffusion of variations, as some spots can be attained by random exploration. The possible world (Firstness) is represented as the maximum attainable volumes of these spaces. The actual filling of these spaces represents the existing world of organized patterns (Secondness). The difference between the possible and the real (how much is left to be filled) appears as a condition of far from equilibrium self-organizing evolution (Thirdness).

It is my tenet that the convergence of shapes is associated with an ever increasing difference between H_max (possible total degeneracy) and H_obs (actual degeneracy) in the sequence space, and that the relation between sequence and shape spaces is causally connected by semiotic agency. Equally, functional shapes are trapped in a subregion of the corresponding neutral network and tend to traverse the same subregion cyclically due to higher order restrictions, so that the greater part of the sequence space remains unattainable.

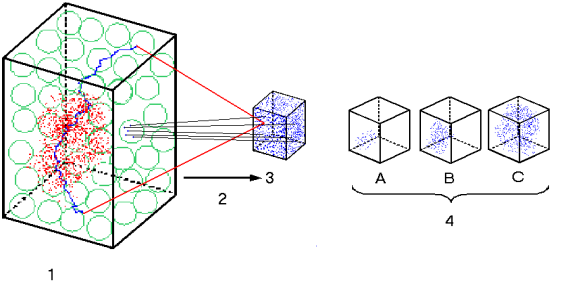

Figure 1: see legend below.

In order to indicate how the Peircian categories overlap and interlock with one another, a decomposition of each component of the triad is presented (see Figure 1).

1. Digital Informational Space (DIS) or Sequence Space. World of all digitally encoded genetic descriptions that can be imagined by permutation of basic symbols. Actually existing genetic descriptions are represented as a hazy cloud of scattered red dots. The blue path stands for one neutral path, that is, the set of sequences that satisfy one shape. It is also shown how from one green ball (the set of neighboring mutants attainable from any sequence) all possible shapes in Analog Informational Space (AIS) can be attained. DIS is very far from saturation, and equilibrium within this volume cannot be physically attained.

2. There cannot be a bijective, one-to-one correspondence between elements of DIS and elements of AIS. The third component that accounts for this mapping, the space of work-actions executed by semiotic agents, is not shown.

3. Analog Informational Space (AIS) or Shape Space. World of all possible stable conformations that can be attained. The components of AIS are responsible for coupling with external referents, thus, providing meaning, functionality and semantics.

4. Entropic expansion in AIS shows a tendency towards saturation.

4.1 Digital informational space (DIS)

Firstness:

This space represents the world of all possible digital texts (series of sequences of symbols) produced as permutations of basic symbols, as in Borges' Library of Babel. It is a space of full potentiality in which all possible digital records are located as such, interaction-free. It thus becomes a heuristic model that helps to comprehend the possible but not necessarily what actually exists in the experienced world. The texts found are only virtual and cannot be inferred from any other informational source. The exploration of this space occurs by random expansion or drift, as it is completely indeterminate. This space is virtually empty, since it is full of potentiality.

Secondness:

Despite the astronomical size of DIS, a minute region of localized dots can be identified. They represent actually existing digital records, some of which correspond to formalizable strings that are subject to manipulation by syntactic rules and are carriers of meaning (shortened descriptions). In this case, the compressed records correspond to digitally encoded information and form part of the real world; an example is the genetic information recorded in DNA, which constitutes the genotype and determines protein linear sequences. By contrast, the majority of the existing series of symbols may correspond to uncompressible series and to series yet to be compressed, i.e. non-coding DNA, or series whose semantic content has not been, or cannot be, elucidated.

Thirdness:

Actually occupied dots are the result of semiotic agency, since restriction and selection must have acted to retain a few among a panoply of preexisting possibilities. The semantic content that can be attributed to some sequences has been acquired due to Thirdness; in like manner, Thirdness is also responsible for producing the "yet to be compressed" and uncompressible sequences that lack semantic content altogether. Inasmuch as this space harbors sequences of symbols both with and without semantic content, it is seen as the room available for effective exploration of new series of symbols as expansion continues. This random exploration is nonetheless driven by the principle of entropy dissipation as a final cause.

4.2 Analog informational space (AIS)

Firstness:

This space represents the world of all possible analog records that are potentially responsible for interactions with external referents. It is the ground of latent interactions with external referents. This space is also the source of feeling and sensation, as primary qualities attributable to forms. The key point is that Peirce’s qualitative aspect of Firstness is, thus, explained as part of the potentiality inherent in analog information.

Secondness:

The deterministic aspect of analog information is made apparent through the structures that have become the seat of real interactions with external referents. It thus conveys the experience of the real, regardless of whether the real was logically expected or not. This space helps to comprehend actually existing forms as realizations of the possible, for occupied dots represent actually existing forms that convey semantics and meaning. Examples are folded protein structures and phenotypes, which depend on linear sequences and genotypes respectively. This category also points to the passivity and inertia of stable structures. One could also discriminate between "internal Secondness" (which points to degenerate records shown as occupied dots in a neutral network in sequence space) and "external Secondness" (which points to external facts and true actions of one thing upon another, represented in the space of ‘work-actions’).

Thirdness:

Thirdness is needed to explain the adaptive movement in this space, which has to do with the fulfilment of an organization process. Analog information acts as the efficient cause of formalized interactions and as the final cause of new formalizable digital texts by imposing selective restrictions. Structures or analog records are in themselves agents, measuring standards and interacting devices. This category points to the activity and the dynamics inherent to structures.

4.3 Space of work-actions performed by semiotic agents

Firstness:

‘Work-actions’ performed by semiotic agents constitute an ever-flowing source of randomness or entropy dissipation that pays the cost of measuring and recording (Andrade, 1999). This increasing randomness induces the expansion in both DIS and AIS. This characteristic also stands for all side-effects of 'work-actions' that account for the relative lack of correspondence between DIS and AIS.

Secondness:

'Work-actions' performed by semiotic agents also explain the appearance of definite encoding and recording of interactions that lead to structure determination. They also represent all kinds of actions that promote the stabilization of both digital and analog records. This characteristic accounts for the observed correspondences between DIS and AIS, since some analog records have become ever more dependent on and predictable from their digital counterpart as their mutual informational content K(digital:analog) increases.
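How predictability of analog records from digital ones translates into a number can be sketched with Shannon mutual information. This is only a rough stand-in: K(digital:analog) in the text is not defined operationally, and using the Shannon quantity I(D;A) in its place is an assumption of this sketch. The two joint distributions below are hypothetical toy data.

```python
import math
from collections import defaultdict


def mutual_information(joint):
    """Shannon mutual information I(D;A) in bits.

    `joint` maps (digital_record, analog_record) pairs to probabilities
    that sum to 1.
    """
    p_digital = defaultdict(float)
    p_analog = defaultdict(float)
    for (d, a), p in joint.items():
        p_digital[d] += p
        p_analog[a] += p
    return sum(
        p * math.log2(p / (p_digital[d] * p_analog[a]))
        for (d, a), p in joint.items()
        if p > 0
    )


# Hypothetical toy data: four sequences, two shapes.
# Case 1: each sequence always yields the same shape (degenerate but definite coding).
definite = {
    ("s1", "X"): 0.25, ("s2", "X"): 0.25,
    ("s3", "Y"): 0.25, ("s4", "Y"): 0.25,
}
# Case 2: every sequence yields either shape with equal probability (no coding).
uncoupled = {
    (s, a): 0.125 for s in ("s1", "s2", "s3", "s4") for a in ("X", "Y")
}

print(mutual_information(definite))   # one bit: the shape is fixed by the sequence
print(mutual_information(uncoupled))  # zero bits: the sequence says nothing about the shape
```

In the first case the mapping is many-to-one yet fully predictable, which is the situation the paragraph above calls a definite coding; in the second, digital and analog records are statistically independent.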

Thirdness:

This is the space of functions, inasmuch as they are defined as particular activities that relate to the whole network of activities. These actions make possible the process from First to Second. The concept of 'work-actions' executed by semiotic agents explains why there cannot be an absolute Third. Thirdness is best represented as the cognitive activity that mediates between sequence and shape spaces. Semiotic agents incarnate the tendency to take habits by fusing together the possible (digital) and the real (analog). Quoting Peirce:

Nature herself often supplies the place of the intention of a rational agent in making a Thirdness genuine and not merely accidental.... How does nature do this? By virtue of an intelligible law according to which she acts.... It is a generalizing tendency, it causes actions in the future to follow some generalizations of past actions; and this tendency itself is some thing capable of similar generalizations; and thus it is itself generative (Peirce, C.P. 1.409).

There are two types of 'work-actions': Metabolic (capture, synthesis, degradation, and transfer) and Reproductive (copy, repair, transcription, translation, and permutation). According to Wächterhäuser (1997) metabolic operations are prior to Reproductive operations.

The relation between the digital and analog informational spaces is mediated by Thirdness and refutes the "one to one" mechanical deterministic correlations originally suggested by the central dogma. This mapping is a real rupture with the view of the central dogma because it includes all possible relations, cyclic processes, flexibility, expansion, and irreversibility that can be conceived in the molecular realm, and provides some general laws about the organization of nature. The influence of noise is very critical to this mapping. Noise affects the very process of information conversion from digital to analog (through translation, folding). Frictions and restrictions act all along in an unpredictable way, but because we are dealing with a closed loop and not only with information contained in a linear sequence, perturbations originating in the activity of natural semiotic agents affect the very process of information conversion. So the activity of natural semiotic agents contributes through semiosis to structural determination.

The central dogma of molecular biology obscures these complex relationships, for it is only concerned with a unidirectional, one-to-one mapping. It is therefore possible to conclude that all possible digitally encoded worlds, bizarre as they may be, have a potential meaning, but most of them remain meaningless unless their corresponding shape or analog version is selected. Linear monotonous versions that do not fold into stable shapes cannot be selected, since selection operates within a defined semantic context given by the network of interacting forms or by a higher order form. Thus, one is bound to assert that what matters for life is not a random sorting of sequences but the finding of functional and active shapes, and that life goes to great lengths to obtain them. We can therefore anticipate the remarkable conclusion that evolution is tied to a greater extent to forms, as mapped in AIS, and less to random permutation of symbols in DIS.

The following reasons explain how Thirdness permits us to recover the idea of continuity:

1. One step variants can be identified in either space.

2. The space of 'work-actions' shows that one can define neighboring functions, that is, functions that can be accessed from an actually existing one by slightly modifying the structure. In the case of proteins, Kauffman (1993: 166-169) has reported neighboring catalytic functions.
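For the digital space, the notion of a one-step variant in the first reason above can be made concrete with the Hamming distance (Hamming, 1950). The following is a minimal sketch; the function names and the example sequence are illustrative choices, not part of the original argument.

```python
def hamming_distance(a: str, b: str) -> int:
    """Number of positions at which two equal-length sequences differ."""
    if len(a) != len(b):
        raise ValueError("sequences must have equal length")
    return sum(x != y for x, y in zip(a, b))


def one_step_variants(sequence: str, alphabet: str = "ACGU") -> list[str]:
    """All sequences that differ from `sequence` at exactly one position."""
    variants = []
    for i, current in enumerate(sequence):
        for symbol in alphabet:
            if symbol != current:
                variants.append(sequence[:i] + symbol + sequence[i + 1:])
    return variants


seed = "GGAUCC"  # arbitrary example sequence
neighbors = one_step_variants(seed)

# A sequence of length L over a 4-letter alphabet has 3 * L one-step variants.
print(len(neighbors))
print(all(hamming_distance(seed, n) == 1 for n in neighbors))
```

The neighborhood grows only linearly with sequence length, while the sequence space itself grows exponentially, which is why a local "ball" of mutants is such a small part of DIS.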

Given the continuity of different forms in their digital representations, form becomes very sensitive to noise, so that the same linear sequences in different circumstances (e.g. the same molecules in different tissues) yield different structures responsible for different functions. Semiotic agents produce and handle this noise in order to favour a definite coding, though flexibility is never lost.

The above discussion permits us to deal with the question of whether forms are irreducible to each other. While forms might seem to be irreducible to each other, the idea of contiguity[3] of forms and functions permits us to envisage a possible reduction of one to another, although a set of a few primary elementary forms could not in principle be identified. Furthermore, while some forms may be contiguous in sequence space as well as in shape-space, it remains to be solved how one can estimate the minimal distance between any two different forms in sequence space. I suggest that the holographic degenerate mapping forbids a definitive answer to this problem. One can take forms A and B in AIS, then go to their preferred digital counterparts and measure the "average" distance between records A and B in sequence space. However, this approach would be insufficient given the random dispersion of neutral networks in sequence space. To conclude, one can never know whether the estimated distance is minimal or not, for it is like estimating the average distance by taking pairs of points in which each point belongs to a different neutral network.

5 THE SEMIOSIS OF RANDOM SEQUENCE OF SYMBOLS: CHAITIN AND PEIRCE

In order to develop Chaitin's concepts of algorithmic randomness in Peircian terms, a two-level hierarchy is required. This will permit us to distinguish between random uncompressible series that have been provided a priori to the system and random series that appear after execution of a compressing algorithm performed by the higher level system. Therefore, in Peirce's terms, (1) Firstness would correspond to random uncompressed a priori sectors, some of which have the potential of being compressed.

A priori random series are independent of the complexity of the observer's formal system. However, when they are relative to the observer's discriminating activity we have Secondness. Then, (2) Secondness is represented within the actually compressed sectors or the existing definite and tangible forms. These regions appear as random from a non-hierarchical ontology, but they stand for ordered and coupled entities as perceived from the higher level. Thus, Secondness is the interface where inner randomness and the observer's semiotic actions (in place of external restrictions) meet. We may note that maximal compression cannot be attained because of the ensuing higher susceptibility to damage by mutations. So some degree of constitutive redundancy is expected. (3) Thirdness would be equivalent to the organizing and formative causes, which can be understood as the tendency to find regularities and patterns or to elaborate a compressed description[4].

In the evolution of binary series in time, one might find the following cases:

A. (Uncompressible) t0 → (Uncompressible) t1: a series remains uncompressible regardless of the formal system used to study it.

B. (Uncompressible) t0 → (Compressible) t1: an uncompressed series becomes compressible when either it has been tackled with a more complex formal system, or it has been modified by mutation in such a way that the previous less complex formal system can account for it.

C. (Compressible) t1 → (Compressed) t2: a compressible series of symbols becomes an actually compressed description after execution of the appropriate algorithm.

It has been well known since Chaitin's work that a random series of symbols (0,1) cannot be compressed (Chaitin, 1969), but experience shows that an appreciable number of descriptions can be shortened by slightly modifying them in interaction with the observer. Furthermore, after a few directed variations, the degree of compression increases in the region where those directed variations took place. The subjective goals of the observers, and their inner drive to interact, make them active agents responsible for adjustments that permit the discovery or actualization of previously hidden patterns. Reciprocal fit is not provided by a Leibnizian pre-established harmony but is instead developed within an interactive process in which the observer's intention to 'find a match' plays a major role. The observer's intentions are driven by the tendency to grasp available energy, while enabling both the conservation and the transfer of energy that might result in perturbation of the observers' inner patterns or measuring devices. Thus, only by including the transient character of both the action of measurement and the created records can the internal observers' dynamics be modeled in terms of Chaitin's complexity approach. Directed transformations due to the active agency of the observer must be paid for by dissipating entropy that might affect some existing records.
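The contrast between uncompressible and compressible series can be illustrated with an off-the-shelf compressor. This is a sketch under stated assumptions: algorithmic complexity in Chaitin's sense is uncomputable, so the length of a zlib-compressed string is used here only as a crude upper-bound proxy, and the "random" series comes from a seeded pseudo-random generator, which is random only with respect to what this particular compressor can detect.

```python
import random
import zlib


def compression_ratio(data: bytes) -> float:
    """Compressed size divided by original size (lower means more compressible)."""
    return len(zlib.compress(data, 9)) / len(data)


N = 4096
rng = random.Random(0)  # fixed seed so the run is repeatable

# A series with no pattern that zlib can exploit.
random_series = bytes(rng.getrandbits(8) for _ in range(N))

# A strictly periodic series of the symbols "0" and "1".
patterned_series = b"01" * (N // 2)

print(f"random:    {compression_ratio(random_series):.3f}")
print(f"patterned: {compression_ratio(patterned_series):.3f}")
```

The choice of compressor plays the role of the observer's formal system: a series that looks uncompressible to one formal system may become compressible under a more complex one, which is case B in the list above.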

There are always sectors that remain uncompressed and sectors that remain compressed. The former will remain so as long as they do not provoke an observer who can direct the compression, and the latter will remain so only as long as semiotic agency or inner selective activity goes on. As energy flows, there is a tendency to compress information in the records, paid for by entropy increases. These entropy increases become manifest as dissipating perturbations and/or partial erasure of records, which appear in a variety of forms, from single base perturbations to the deletion of larger fragments and the accumulation of mutations. This partial record erasure is the only way to relieve structural constraints on the evolving systems, as exemplified by the events of the Cambrian explosion.

The creation of new links does not always involve the loosening of former ones, since any transmitted perturbation is most likely to affect uncoupled motifs, which represent a majority. That is, non-coding DNA regions play a passive role in buffering against mutations (Vinogradov, 1998). The more intensive the ability to take advantage of random fluctuations, or the satisfaction of subjective goals, the higher the transmitted perturbation. In other words, the more pressure is exerted upon a solid mould, the greater the perturbation and the unexpected fractures that affect the mould. Similarly, let us remember that each level of organization exists within a defined threshold of energy barriers, and that if the work executed is not sufficient to drive the system out of its well of stability, the perturbed system will remain stable and will not break down into component subsystems. Conversely, to move upward in the organized hierarchy requires selective work. However, as complexity increases, the system will also develop a set of algorithmic rules that may be used to compress previously uncompressed domains. Therefore, semiosis becomes operative as a physical dynamics that is restricted only by thermodynamic considerations and by the presence of actually existing forms.

Compressions elucidate the intelligibility that was hidden in the former uncompressed state. The basic assumption of all science is that the universe is ordered and comprehensible. In a similar vein, it can be said that this order within the universe is compatible with the processes of life, for at least one world exists, our planet Earth, in which life has emerged. Therefore, the world must have a property analogous to algorithmic compressibility for life to exist. We may conclude that life forms are adapted to the discovery of suitable compressions, and also that living forms possess embodied algorithmic compressions. The point, however, is that suitable compressions are only those that fit a defined shape. Shapes permit the recovery and storage of compressed descriptions. Digital descriptions are compressed within their actions as they either collapse or materialize in functional shapes.

The transition from analog coding (holistic three-dimensional pattern recognition) to digital coding (linear) entails a loss of information. This is equivalent to the passage from an image (perception) to a statement (cognition). Enzyme-substrate recognition is holistic; however, in spite of its dependence on highly specific sequence domains, such recognition does not completely eliminate information that is non-relevant in terms of sequence specificity, because this type of information is required as structural support for the active site. Nevertheless, shortening of descriptions takes place in the transition from analog to digital (Dretske, 1987), since context-dependent information can be dispensed with. The adequate shape that is encoded in a compressed description is all that is required for the activity of a living system, namely, the establishment of interactions that permit the continuation of the measuring and recording process.

On the other hand, as discussed above, the mapping of digital semiotic descriptions into analog semantic versions (shapes) shows that shapes maximize the amount of information contained in the space of sequences, and that the relation between these two sets cannot be formalized completely.

6 EMERGENCE OF NEW LEVELS OF ORGANIZATION

As a consequence of the above discussion, the hierarchical organization of nature must be driven by the tendency to fill the AIS rapidly. Contrary to the accepted view, there is no need whatsoever to try all permutations compatible with the sequence space. Most hierarchical theories accept that levels emerge on top of the adjacent lower level; while this is part of the truth, one must be reminded that the higher order or ecological (analog) level is always present, given the continuity created by the interactions between existing units. This higher level, however, is not static and is in a permanent process of ‘becoming’ by including newly emerged entities from the lower levels.

Let "Lo" stand for the ground level, for merely practical reasons that are not necessarily ontological. It must present a dual nature: digital (discontinuous) and analog (continuous). As the network of interactions corresponding to this ground level "crystallizes", its corresponding AIS starts to be explored.

1. As (Lo) AIS approaches saturation a new level (L1) starts to appear.

2. The newly emerging level begins to unfold into two ever more differentiated instances, digital (L1digital) and analog (L1analog), that are kept together by semiotic agent actions that hold K(digital:analog) at a value adequate to maintain the cohesion of the system.

3. As this tendency consolidates and the new AIS (L1analog) approaches saturation, new levels (L2 and L3) can emerge in between. Entropic expansion cannot stop just because AIS is filled up; hence a new level is created that permits the persistence of entropic expansion. New forms dissipate entropy and higher level forms dissipate even higher amounts of entropy.

4. Thus, again, newly emerging levels begin to unfold into ever more differentiated instances: digital (L2digital) - analog (L2analog), and digital (L3digital) - analog (L3analog).

5. If a situation is reached in which AIS is not near saturation, the emergence of new levels will not take place.

For a new level to appear two conditions are required:

A. Saturation of the shape-space (AIS) corresponding to the lower adjacent level.

B. Decrease of mutual information between digital and analog records in the former level, so that the presence of the emerging new level is needed in order to keep the cohesion of the organized system.

Every level of organization thus has dual digital-analog information. Those hierarchies of analog systems in which the digital component is not evident, such as ecological hierarchies (Eldredge, 1985), must also possess a digital counterpart that is made apparent when the time dimension is considered. The sequence of events along a cyclical loop of transformations is amenable to a digital representation as long as some basic units (symbols) are defined. In any instance, the saturation of AIS drives evolution towards higher levels of organization in inclusion hierarchies. Entropy dissipated to the outside by the lower levels becomes internally dissipated entropy for the higher order level, though internal entropy production by the higher order system also results from its own behavior and dynamics.

In this exploratory manner one can, in the near future, open avenues for understanding the hierarchical organization of nature, where the basic unity of information processing is best accounted for by the notion of form.

ACKNOWLEDGEMENTS

I am grateful to Peter Harries-Jones for thorough reading of the manuscript and for several helpful comments and suggestions.

REFERENCES

Andrade, E. 1999. Maxwell demons and Natural selection: a semiotic approach to evolutionary biology. in Thomas A. Sebeok, Jesper Hoffmeyer and Claus Emmeche eds. (Special Issue “Biosemiotics”) Semiotica 127 (1/4): 133-149.

Andrade, E. 2000. From External to Internal Measurement: a form theory approach to evolution. BioSystems 57 (2): 49-62.

Aristotle. 1998. Metafísica. tr. Francisco Larroyo. Mexico: Ed. Porrúa.

Aristotle. 1992. Physics (Book I and II). tr. William Charlton. Oxford: Clarendon Press.

Bateson, Gregory. 1979. Mind and Nature. A necessary unity. New York: Bantam Books.

Bateson, Gregory and Bateson, Mary Catherine. 1987. Angels Fear: Towards an Epistemology of the Sacred. New York: Macmillan Publishing.

Baldwin, J.M. 1896. A new factor in evolution. American Naturalist 30: 441-451.

Bermudez, C., E. Daza and E. Andrade. 1999. Characterization and Comparison of Escherichia coli transfer RNA isoaceptors by graph theory based on secondary structure. Journal of Theoretical Biology 197 (2): 193-205.

Blastolla, U., E. Roman and M. Vendruscolo. 1999. Neutral Evolution of Model Proteins: diffusion in sequence space and overdispersion. Journal of Theoretical Biology 200: 49-64.

Brooks, D.R. and E.O. Wiley. 1988. Evolution as Entropy: Toward a Unified Theory of Biology. 2nd ed. Chicago and London: University of Chicago Press.

Buffon, G.L.L. 1976. Histoire des Animaux, quoted in G. Canguilhem. El Conocimiento de la Vida. Barcelona: Editorial Anagrama, p. 63.

Chaitin, G.J. 1969. On the length of programs for computing finite binary sequences: statistical considerations. Journal of the Association for Computing Machinery 16: 145-159.

Dretske, F.I. 1987. Conocimiento e Información. Barcelona: Salvat Editores.

Eigen, M. 1986. The Physics of Molecular Evolution. Chemica Scripta 26B: 13-26.

Eldredge, N. 1985. Unfinished Synthesis: Biological Hierarchies and Modern Evolutionary Thought. New York: Oxford University Press.

Hamming, R.W. 1950. Error detecting and error correcting codes. The Bell System Technical Journal 29: 147-160.

Hoffmeyer, J. and C. Emmeche. 1991. Code-Duality and the Semiotics of Nature. in Myrdene Anderson and Floyd Merrel eds. On Semiotics of Modeling. pp. 117-166. New York: Mouton de Gruyter.

Jacob, F. 1982. The Logic of Life: a History of Heredity. tr. B.E. Spillmann. New York: Pantheon Books.

Kant, I. [1928] 1964. The Critique of Judgement. tr. James Creed Meredith. Oxford: Clarendon Press.

Kauffman, S. 1993. The Origins of Order: Self-organization and Selection in Evolution. New York and Oxford: Oxford University Press.

Matsuno, K. and S.N. Salthe. 1995. Global Idealism/local materialism. Biology and Philosophy 10: 309-337.

Maynard-Smith, J. 1970. Natural Selection and the Concept of Protein Space. Nature 225: 563-564.

Olby, R. 1994. The Path to the Double Helix. New York: Dover Publications.

Peirce, Charles S. 1931-1958. Collected Papers of Charles Sanders Peirce, 8 vols. eds. Charles Hartshorne, Paul Weiss, and A.W. Burks. Cambridge, MA: Harvard University Press.

Peirce, Charles S. [1965] 1987. Collected Papers. Cambridge, MA: Belknap Press. Obra Lógico Semiótica tr. and ed. Armando Sercovich. Madrid: Taurus Ediciones.

Perelson, A.S. 1988. Toward a realistic model of the immune system. in A.S. Perelson ed. Theoretical Immunology II: Santa Fe Institute Studies in the Sciences of Complexity. pp. 377-401. Reading, Mass.: Addison-Wesley.

Root-Berstein, R.S. and P.F. Dillon. 1997. Molecular Complementarity I: the complementarity theory of the origin and evolution of life. Journal of Theoretical Biology 188: 447-479.

Salthe, S.N. 1999. Energy, Development, and Semiosis. in Edwina Taborsky ed. Semiosis, Evolution, Energy: Towards a Reconceptualization of the Sign. pp. 245-261. Aachen: Shaker Verlag.

Schuster, P. 1997. Extended molecular evolutionary biology: artificial life bridging the gap between chemistry and biology. in Christopher Langton ed. Artificial Life. pp. 48-56. Cambridge, Mass.: The MIT Press.

Segel, L.A. and A.S. Perelson. 1988. Computations in shape space: A new approach to immune network theory. in A.S. Perelson ed. Theoretical Immunology II: Santa Fe Institute Studies in the Sciences of Complexity. pp. 321-343. CA: Addison-Wesley.

Taborsky, Edwina. 2001. The Internal and the External Semiosic Properties of Reality. Semiosis, Evolution, Energy, Development 1 (1). Available on Internet: http://www.lib/utoronto.ca/see

Thompson, D'Arcy. 1961. On Growth and Form, Abridged. ed. J.T. Bonner. Cambridge: Cambridge University Press.

Tiana, G., R.A. Broglia, H.E. Roman, E. Vigezzi and E.I. Shakhnovich. 1998. Folding and misfolding of designed protein-like chains with mutations. Journal of Chemical Physics 108: 757-761.

Vinogradov, A.E. 1998. Buffering: a passive-homeostasis role for redundant DNA. Journal of Theoretical Biology 193: 197-199.

Wächterhäuser, G. 1997. Methodology and the origin of life. Journal of Theoretical Biology 187 (4): 491.

Whitehead, A.N. [1929] 1969. Process and Reality. Toronto: Macmillan.