ABSTRACT

In 1991 Claus Emmeche and I suggested that ‘the chain of events which sets life apart from non-life.... needs at least two codes: one code for action (behaviour) and one code for memory—the very first of these codes necessarily must be analog and the second very probably must be digital.’ This principle of code-duality played a major role in our initial ideas on biosemiotics. The paper looks back to the origin of the idea of code-duality and confronts some of the ambiguities raised by the concept.

INTRODUCTION

While preparing for this lecture I searched back through my earlier writings and was surprised to see the extent to which the idea of code duality grew out of reflections on the historical relation between nature and culture. As a biochemist feeling concern for the ways my discipline was becoming increasingly imprinted in technology I had engaged myself in questions of ecological history (this work was published in Danish as Hoffmeyer, 1982). One major outcome of this work was the understanding of biological techniques as a kind of information technique, i.e. a series of techniques for the storing and processing of information of biological origin.[1] Just as in mental phenomena where computers took advantage of embedded powers derived from putting intellectual problems into algorithmic schemes and thus digital form, so genetic technology took advantage of the combinatorial powers inherent in nature's major digital code, the genetic code.

While this rather obvious idea led many people to believe in computers as ideal models for living systems, Gregory Bateson's subtle writings may have helped me to escape such a shallow parallelism. For in Bateson's understanding life, natural or cultural, depends on the interactions between multiple kinds of codings, and basically he distinguishes two kinds of codings, those based on a graded response (e.g. the governor in a steam engine) and those based on on-off thresholds (e.g., the thermostat): "This is the dichotomy between analogic systems (those that vary continuously and in step with magnitudes in the trigger event) and digital systems (those that have the on-off characteristic)" (Bateson, 1979: 123).

I was clearly inspired by these ideas when in 1985 I wrote an essay for a Danish newspaper arguing for a deep parallelism between ‘the workings’ of natural and cultural systems:

The point of an egg is that the hen here has to go through a phase in which it exists mostly as a digital code. The coherence of origin thus, paradoxically, is assured by a punctuation of bodily continuity. It is tempting to see the egg as a kind of book about the hen. We might call it "Make-a-hen"...

The digital version of the hen opens the door for certain ‘experiments’ that would not otherwise have been possible. For instance the experiment of coupling half a hen and half a cock and see what becomes of it. Such things cannot be done with real hens and real cocks, for they have to obey the limitations of freedom caused by natural law. In the digital phase however these limitations are open to experimentation.

Here again the comparison to the freedom of books is not far away. Thus in books everything may happen: Friday may meet Robinson on a deserted island; the old Maria Grubbe[2] might eventually meet herself while still a young woman; Tycho Brahe might for that matter run upon Jens Otto Krag.[3] Sexual reproduction and books resemble each other in that they translate reality into a code, which may be processed in safety from the pedantic forefinger of natural law. For this to bear any fruit, the coding must of course potentially become retranslated back to enable changes of reality. This is what is called evolution. Systems which have the ability to develop through such chains of coding have something in common, which Bateson thought we should call 'mind' (Hoffmeyer, 1985).

Thus the essay suggested a deep and in fact internal relation between the ‘coding chains’ of culture and nature, and in both cases the ascription of primacy to either the digital or analogic codings leads to unjustified reductionism:

The hen is in all of her behavior, her physiology and her anatomy, as much a message of an eventual egg as the egg is a message of an eventual hen. As a hen the egg is just figuring as an analog coded physical continuous and sensually appealing message. It was exactly this dimension of nature, its swarming plenitude of messages, which natural science eliminated by taking dead nature (the nature of physics) as a model for living nature (discussed in Hoffmeyer 1984). Logically enough we thereby have ended up being threatened by a ‘silent spring.’

The historical consequence of making dead nature the model of nature at large was that all the talking—and all mindfulness—went on exclusively in the cultural sphere. As a result we now suffer the divided existence of the two great cultures, the humanities and the scientific-technological culture. One of them only knows about the physical dimension of the world, the other only knows about the world of language. The barrier between reality and language thus derived as a logical consequence of our silencing of nature. And although the two life-worlds consider each other with reciprocal suspicion or even contempt, they are indeed ideal partners: For they are equally anxious to maintain that dichotomy of nature and culture which makes sure that technical reason can proceed forward unhindered by humanistic concerns—and vice versa. ... This is why the natural scientific life-world now finds itself on the edge of an intolerable relativism (since knowledge of nature is necessarily formulated in language this knowledge is so to speak imprisoned in the wrong life-world—and which measure of validation can it find there?), just as the humanistic life-world is on the edge of an intolerable disbelief: postmodernism.

This is why I want to invoke a new kind of curiosity, a curiosity directing its attention towards, what we might call the wonder of the code and which does not put that wonder aside by the enclosure of the codes into one or the other state space or life-world. For it is the nature of the code to point outside of its own mode of existence—from the continuous to the discontinuous message, from the physical and therefore law bound message to the more free message. And back again in an unending chain.

It is not my intention to claim that the ‘coding chains’ of life and culture are the same. But human beings participate in them both at the same time, and therefore we need to see how they are related—in terms of origin as well as in terms of functionality (Hoffmeyer 1985).

In 1985, however, I had still not freed myself from the metaphor of nature as a language:

Human evolution, and thereby the origin of the cultural chains of coding confronts us with the question of how a linguistic system could evolve into a metalinguistic system, i.e. to a system that can conceptualize its own 'languaging', its own life (ibid).

And in 1987, when the concept code-duality was finally introduced, it still retained a Saussurian linguistic tone:

Applying a semiotic perspective to nature, one is immediately struck by the fact that living systems exhibit what might be termed code-duality. The DNA may be seen as a digital-coded version of the organism, while the population may be seen as an analog-coded version of the species' gene-pool—or, as I prefer to call it, "the language of the species". (Thus to follow Saussure, the DNA of the single organism may be seen as 'parole', whereas the deep structure hidden in the total genetic message of the species could be seen as 'langue' (Saussure, 1916).) This code-duality seems, in fact, to be the most remarkable thing which sets living systems apart from inanimate nature (Hoffmeyer, 1987).

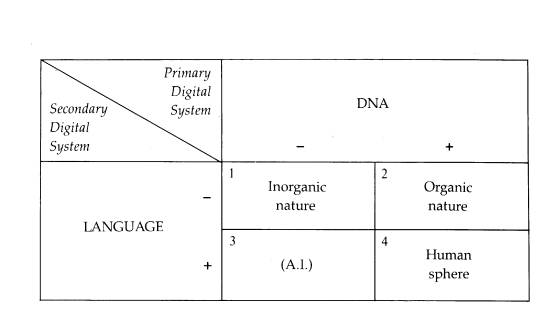

In conclusion one can say that the idea of code-duality was born out of an understanding of the modern IT-revolution—as in a way proceeded by an IT-revolution which had taken place 3.8 million years ago and consisted in the appearance on planet earth of living systems capable of exploring the combinatorial powers of the world's first digital code, the DNA code (Figure 1). In both cases the digital codes were tools for an intentionality, which would not itself be contained in a single code, but which in fact depended on the reshuffling of messages between analog and digital codes.

CODE DUALITY

For a number of reasons these early formulations of the concept was not very satisfactory, and it was only when Claus Emmeche and I undertook writing our joint paper from 1991, ‘Code-Duality and the Semiotics of Nature,’ that a more consistent idea of code-duality emerged. Here code-duality was derived from

Figure 1: Nature's double digital system. From (Hoffmeyer 1987)

considerations on the question of the origin of life. Beginning with Gregory Bateson's definition of information as ‘a difference which make a difference’ we reformulated the question of the origin of life as the question ‘how could systems capable of responding to differences in their surroundings arise in the middle of that which knows no difference’ (Hoffmeyer and Emmeche 1991). I think it might be informative to quote our answer to that question at full length:

To answer this question one has to be aware, that the notion of a system already implies some kind of distinction between what belongs and what does not belong to the system. We, of course, can make such a distinction, but we were not there at the time of the beginning of life—and neither was (per definition) any other kind of living system capable of selecting differences or distinctions in their surroundings. In a certain sense prebiotic systems did not exist—only three and a half billion years later we draw their outlines in our imagination. This of course might be said of everything pre-human. Our point is, however, that living systems did in fact exist (not only materially but as organized entities) before human minds 'created' their outlines.

Suppose that eventually a living system arose from the primordial soup—or wherever it was. Then we will have to ask: Who was the subject to whom the differences worked on by such a system should make a difference? If one admits at all, that living systems are information processing entities, then the only possible answer to this question is: the system itself is the subject. Therefore a living system must 'exist' for itself, and in this sense it is more than an imaginary invention of ours: For a system to be living, it must create itself, i.e. it must contain the distinctions necessary for its own identification as a system. Self-reference is the fundament on which life evolves, the most basal requirement. (This does not pertain to non-living systems: There is no reason for the hydrological cycle to know itself. Thus, rivers run downstream due to gravity, water evaporates due to the solar heat, nowhere does the system depend on self-recognition).

Another way to express this whole matter is to say that differences are not intelligible in the absence of a purpose[4]. If nothing matters matter is everything.

But what is the basis of this self-reference, and thus the basis of life? We shall suggest here that the central feature of living systems allowing for self-reference, and thus the ability to select and respond to differences in their surroundings, is code-duality, i.e. the ability of a system to represent itself in two different codes, one digital and one analog (Hoffmeyer, 1987). Symbolically this code-duality may be represented through the relation between the egg and the hen.

Self-reference clearly depends on some kind of redescription. The system must be able to construct a description of itself (Pattee, 1972; Pattee, 1977). This description furthermore must stay inactive in—or at least protected from—the life-process of the system, or else the description will change—and ultimately die with the system. In other words, the function of this description is to assure the identity of the system through time: The memory of the system. In all known living systems this description is made in the digital code of DNA (or RNA) and is eventually contributed to the germ cells.

We suggest, that it is by no means accidental that the code for memory is of a digital type. What should be specified through the memorized description is not the material details of the system, but only its structural relations in space and time. If such abstract specifications should be expressed through an analog code only very simple systems would be possible, and those would probably not survive.

(For a parallel: if human communication and memory depended solely on analog codes, e.g. the ability to mime, if in other words we did not possess the digital code of language, our cultural memory would be as short as that of chimpanzees—and the social structure accordingly simple).

Now, for the system to work the memorized description in the digital code must be translated to the physical 'reality' of the actual living system. For this translation (the developmental process) to take place the fertilized egg cell, or some equivalent cell, must be able to decipher the DNA-code as well as to follow its instructions in a given way. This need for the participation of cellular structure shows us that a sort of 'tacit knowledge' is present in the egg cell (Pattee, 1977; Polanyi, 1958). And the existence of this tacit knowledge hidden in the cellular organization must be presupposed in the DNA-description. Thus, the digital redescription is far from a total description.

The realization in space and time of the structural relations specified in the digital code defines what kind of differences in the surroundings the system will actually select and respond to. Through this realization a new phase is initiated, the phase of active life. One might say that in this phase, the 'analog phase', the message of the memory is expressed.

Eventually the system will survive long enough to pass on its own copy of the digitalized memory (or part of it) to a 'new generation'. Essentially this corresponds to a back-translation of the message to the digital form. But this is a process that takes on its significance only when seen at the level of the population. The population (rather than the single organism) passes on a message about conditions of life to the memory (the gene-pool). The population should be considered a code expressing a message. This code, however, necessarily is analog, since it has to interfere with the physical surroundings and thus must share with these surroundings the properties of physical extension and continuity.

The chain of events, which sets life apart from non-life, i.e. the unending chain of responses to selected differences, thus needs at least two codes: one code for action (behavior) and one code for memory—the first of these codes necessarily must be analog, and the second very probably must be digital.

Looking back it strikes me that the concept of code-duality was from the beginning linked to the idea of life as a chain of codings and recodings, i.e. life was not seen as an organismic property but rather as a property of chained codes. Or to say this in other words, the view of code-duality implies that the organism cannot be the privileged unit in biology and neither can the genome. Life is a semiotic process carried forward in time by the lineage in its interaction with changing environments.

INTERACTORS AND REPLICATORS

There is an interesting relationship between the idea of code-duality and Weismann's old doctrine of the separation between soma and germ. In the 1890s the German biologist August Weismann showed that the separation between those cell lines which were destined to become germ cells, and the cell lines destined to become somatic cells, takes place very early in embryogeny[5]. The implication of this finding was quite significant, since it meant that whatever occurs to an individual will not affect the progeny of that individual because there is no way it can be passed over to the germ cells. Thus Lamarckian inheritance, i.e. the inheritance of acquired characters was not possible.

Figure 2: Molecular Weismannism. G = genome, S = somatic cells (body)

In the 1960s Weismannism was in a sense confirmed at the molecular level through the adoption of the so-called central dogma, according to which the flow of information in a cell is unidirectional, i.e. from the DNA to the RNA and further on to the proteins. Since the proteins are the main constituents of the bodily machinery, the central dogma implies that genetic information which has once become transformed to the machinery of the body has no way to return to the genome. The central dogma thus confirms the Weismannian conception of the body as a kind of dead end in the evolutionary process, as often depicted in a figure such as Figure 2.

It is no wonder that a theory which sees the organisms as causal dead ends in the evolutionary process would sooner or later give rise to the idea that the organisms are in fact just instruments for the strategies of the genes. This was the essence of the well-known theory of the selfish genes proposed by Richard Dawkins (Dawkins, 1976). In Dawkins' conception the individual organisms are nothing but survival machines or vehicles which serve the genes in their attempt at being carried on to the next generation. We thus get the distinction between vehicles and replicators as explained in a famous passage from his book:

What was to be the fate of the ancient replicators? . . . Now they swarm in huge colonies, safe inside gigantic lumbering robots, sealed off from the outside world, communicating with it by remote control. They are in you and me; they created us, body and mind; and their preservation is the ultimate rationale for our existence. Now they go by the name of genes, and we are their survival machines (Dawkins, 1976: 21).

In all its pompous ambition this quote has managed to capture the imagination of many theorists, and yet it calls for serious objections. First, one should remember that before the genes could create human beings they had themselves to be invented linguistically, which in fact did not happen until 1909 when the Danish biologist Wilhelm Johannsen coined the terms genotype, phenotype and gene. This is not a joke, for a major problem with Dawkins' idea is his somewhat blurred conception of what a gene is. The most common meaning of a gene in molecular biology is ‘a reading sequence,’ that is, a sequence of nucleotides that is transcribed into one piece of messenger RNA that is either translated into protein or used directly in the metabolism of the cell. But what Dawkins refers to as a gene is actually something quite different, namely an ‘arbitrarily chosen portion of the chromosome.’ But while such an understanding accounts for the replicative property of a gene, it leaves the phenotypic effects of the gene totally unaccounted for. As Sterelny and Griffiths put it in their thorough analysis of gene selectionism "if genes are just arbitrary DNA sequences, then most of them will have no more systematic relation to the phenotype than an arbitrary string of letters has to the meaning of a book" (Sterelny and Griffiths, 1999: 79).

Let me in this context just dwell upon one aspect of Dawkins' idea, which strikes me as particularly counterintuitive. The reason why DNA is so well suited to the role as a carrier of memory, i.e. as a substrate for saving genetic instructions which have worked well in ancestor organisms, is that under normal physiological conditions DNA is a very inert molecule. It is of course not a good idea to save memory in a substrate that is intended for action. And, as Harvard geneticist Richard Lewontin has pointed out, ‘DNA makes nothing’ (Lewontin, 1992). In actual fact the DNA molecules are actively protected against the vicissitudes of life by a range of enzymes taking care of any necessary repairs. Rather than acting themselves, the coiled DNA helices wait passively inside the cell nucleus for proteins to arrive and cut them open, unwind their two strands and transcribe one of them into an RNA transcript, which is then transported outside the nucleus and further worked upon by a piece of extremely complicated protein machinery called a ribosome. Judged against this background it seems to be strangely at odds with biochemical reality to ascribe an active or even creative role to simple ‘portions of the chromosome.’ No portion of a chromosome is able to do anything interesting by itself, and most certainly not to replicate itself. Thus, in a decent manner of talking, genes might be called replicative units, but not replicators, since this term implies the possession of some kind of agency, which genes clearly do not possess, no matter how you choose to define them.

The American philosopher David Hull tried to escape the ascription of ontological primacy and causal efficiency to genes implicit in Dawkins' vehicle-replicator distinction by suggesting the term ‘interactor’ instead of vehicle. Thus in Hull's terminology replicators are ‘entities that pass on their structure directly in replication’ while interactors are ‘entities that produce differential replication by means of directly interacting as cohesive wholes with their environments’ (Hull, 1981, cited from Depew and Weber, 1995). For years this quibbling interactor-replicator distinction has managed to occupy centre stage in scholarly discussions on evolutionary theory. From the biosemiotic point of view presented here, however, this distinction implies a reification (or entification) of what should rightly be seen as spatio-temporal communicative surfaces. Rather than focusing on survival and competition among genes or organisms (replicators or interactors), code-duality directs our attention to those processes whereby new signification is created and exchanged, and thus towards the one doubtless directionality which characterizes organic evolution: the direction towards a world inhabited by systems possessing ever more semiotic freedom (Hoffmeyer, 1996).

THE NEUMANN-PATTEE THESIS

Another major precursor for the idea of code-duality was the American biophysicist Howard Pattee's distinction between a ‘linguistic’ and a ‘dynamic’ mode of complex systems (Pattee, 1977). As sources for this idea Pattee explicitly cites Niels Bohr's discussion of the complementarity principle as pertaining to the phenomenon of life, and also the work of John von Neumann. In a recent paper Pattee quotes von Neumann saying:

We must always divide the world into two parts, the one being the observed system, the other the observer . . . . That this boundary can be pushed arbitrarily deeply into the interior of the body of the actual observer is the content of the principle of the psycho-physical parallelism—but this does not change the fact that in each method of description the boundary must be put somewhere, if the method is not to proceed vacuously. (von Neumann (1955), quoted in Pattee, 1997).

Pattee now explains von Neumann’s point in the following way:

Von Neumann defines a physical system S whose detailed behavior must follow from the fundamental laws of physics, since these laws describe all possible behaviors. But if the particular behavior of S is to be calculated we must measure the initial conditions of S by a measuring device M. Therefore, the essential function of measurement is to generate a computable symbol, usually a number, corresponding to some aspect of the physical system.

Now the measuring device also must certainly obey the laws of physics even in the process of measurement, so it is possible to correctly describe the measuring device by the laws of physics. One must then think of the system and measuring device together as just a larger physical system S' = (S + M). But then to predict anything about S' we must have a new measuring device to make new measurements of even more initial conditions. Obviously, this way of thinking gets us nowhere except an infinite regress.

The point is that the function of measurement cannot be achieved by a fundamental dynamical description of the measuring device, even though such a law-based description may be completely detailed and entirely correct. In other words, we can say correctly that a measuring device exists as nothing but a physical system, but to function as a measuring device it requires an observer's simplified description that is not derivable from the physical description. The observer must in effect choose what aspects of the physical system to ignore and invent those aspects that must be heeded. This selection process is a decision of the observer or organism and cannot be derived from the laws (Pattee, 1997).

Since not only human beings but living systems at large are fundamentally engaged in observations or measuring processes it follows that: "we must define an epistemic cut separating the world from the organism or observer. In other words, wherever it is applied, the concept of semantic information requires the separation of the knower and the known. Semantic information, by definition, is about something" (ibid). And thus, according to Pattee, in all living systems we must have one part of the system operating in a linguistic and time-independent mode (i.e. the DNA) and one part operating in a dynamic and time-dependent mode (i.e. the protein system).

Pattee underlines that he is not suggesting a Cartesian dualism here but only a ‘descriptive dualism,’ for although a measuring process depends on choices which cannot be derived from laws, such choices are seen by Pattee as functions coded in DNA and ultimately generated by natural selection.

But this appeal to natural selection as the mechanism for bridging the Cartesian dichotomy between knower and known is not convincing. How could a purely mechanical process like natural selection possibly push non-knowing dynamical systems across the logical gap separating such systems from the realm of measurement and knowledge? Many people apparently entertain this illusion of natural selection as a mysterious bridge across the Cartesian Divide. But natural selection is either a selection in the true sense of this term, in which case a selector (and thus measurement) must be presupposed for its working so that some kind of preference (the essence of selection) can be executed, or it is not a true selective process, in which case it cannot explain how preferences can possibly arise in the midst of the physical world.

Like Bohr and later von Neumann, Pattee has taken a long and courageous step in facing that borderline paradox which necessarily must appear, whenever you subscribe to an ontology of natural law. By this expression I try to characterize the conception of the world, which Pattee formulates quite unambiguously in the very first sentence of the quote given above, where he says that ‘these [physical] laws describe all possible behaviors.’ The transfer of Bohr's thinking about complementarity to the realm of life processes doesn't seem to help much here. For if complementarity is thought of in ontological terms we are immediately brought back to the dualism Pattee explicitly rejected, but if complementarity is thought of in epistemological terms the claim for complementarity is reduced to the assertion that even though we cannot describe the semiotic dimension of the world in the same language we use to describe the dynamic aspects of the world, this is due to a deficiency of language or thought. The semiotic dimension of life is like glimmerings which we cannot reach behind, because we are totally enveloped by them.

I am aware that this figure of thought is quite widespread. Thus it seems related to the so-called ‘intentional stance’ suggested by philosopher Daniel Dennett, according to which we cannot understand the lives of people (or animals) without describing them as possessed by an unceasing intentionality. And even though this intentionality is illusory, we cannot deal with the world without pretending it is real.

Rather than taking refuge in such powerless ideas of what for every one of us is life's deepest and most real content, I will suggest that we give up the ontology of natural law by following the lead of Charles S. Peirce who recommended that we see natural laws as themselves a result of a cosmic evolutionary process. "This supposes them not to be absolute, not to be obeyed precisely" (CP 6.13)[6] and they cannot therefore be expected to explain exhaustively our world. In the Peircean vision nature's tendency to take habits comes first and explains the growth of all kinds of regularities in our universe such as those regularities which we account for by natural laws. Since a habit is essentially an interpretation[7] it follows that in the Peircean vision semiosis has primacy and acts by guiding efficient causation.

Whether one prefers the ontology of natural law or the Peircean cosmology is a matter of choice. To an author such as myself, who was educationally socialized to believe in materialistic realism, the Peircean position was not easy to accept, and I accepted it only reluctantly because it offered what seemed to be the only escape route from the absurdities of the ontology of natural law.

Code-duality transgresses the Neumann-Pattee thesis by claiming that the linguistic or symbolic mode and the dynamic mode are both fundamentally semiotic modes. The distinction does not separate a semiotic mode from a non-semiotic or dynamic mode but rather posits two different kinds of semiotic coding. Thus semiotic processes characteristic of the ‘linguistic mode’ are based on digitally coded symbols, while the semiotic processes characterizing the dynamic mode are indexical or iconic and analogically coded. The analogically coded signs thus correspond to the myriads of topologically organized indexical and iconic semiotic processes in cells and organisms which incessantly coordinate body parts and their relation to the environment, and in so doing they are also responsible for the interpretation and execution of the genomic instructions.

CODES IN CONTEXT

The code concept and the distinction between digital and analogic codes are not without ambiguities, partly because the term code is used differently in different disciplinary contexts, and partly because the use of the terms analogic and digital is itself very context sensitive.

In the context of semiotics the term ‘code’ has been used in two quite different senses. Thus influenced by the information theory in the 1960s and 1970s the term code was taken to mean ‘a set of shared rules of interpretations’ (Thibault, 1998), i.e. the code was seen as a context-free set of rules for the encoding, transmission and decoding of information. This conception for instance underlies the central dogma of molecular biology, where genetic information is believed to be passively passed on from DNA to proteins according to more or less unambiguous rules. More recent findings have shown that the transcription of genes to mRNA is in fact highly dependent on the presence of a number of protein factors which themselves reflect the cellular or organismic context in which the transcription process takes place[8]. If, as often happens, the genetic code is used metaphorically to express the idea that genes ‘code for’ certain organismic traits (and not only for given proteins), we are of course still farther away from talking of codes in the precise information theoretical sense of the concept.

The term code in code-duality should not therefore be taken in the narrow sense of information theory. The codes operative in living systems, and thus in code-duality, are indeed codes in the sense of modern semiotics, where a code may be defined as "a semiotic resource—a meaning potential—that enable certain kinds of meanings to be made (in language, in the ways we dress, in our eating rituals, in the visual media, and so on) while others are not, or at least in that code" (Thibault, 1998). Thus, analogic codes are sets of signs whose reference to the signified object is based on iconic or indexical relations, while digital codes are sets of signs whose reference to the signified object is based on some sort of punctuation of the spatio-temporal continuum (e.g. the setting of a triplet reading frame) and which must accordingly be based on custom or convention (e.g. in the sense of evolution). Punctuations belong to descriptive systems, they assume a negation which cannot be performed by nobody, or, they assume the existence of somebody, i.e. an observing system. Thus digital codes represent a higher order structuring relative to analogic codes. Higher, here, is taken to mean ‘superimposed’ (so that in the example below, the threshold level of cAMP is superimposed on the gradual, analogic, relation between cAMP and lack of glucose).

Obviously, analogic codes may eventually become digitized by becoming part of higher order digital codes. In itself, for instance, a drawing of an owl is usually just an icon, but in ancient Egypt such a drawing, when presented in the context of hieroglyphs, came to signify the phonetic value ‘m.’ Or a painting may become part of an exhibition and thereby become digitized into a representative of a given artistic epoch or style of painting, so that its reference is now determined primarily by the setting of the exhibition and only secondarily by its original iconic power.

A fascinating example of an evolutionary digitization of an indexical relationship was suggested by the American biochemist Gordon Tomkins (Tomkins, 1975) and concerns a group of bacterial signal molecules which are sometimes called alarmones, because their accumulation in the cytoplasm signals stress. One example is a substance called cyclic AMP (cAMP), which accumulates in bacterial cells starved for glucose. A high concentration of cAMP in the cell induces the cell to start producing enzymes that metabolize other kinds of sugars[9]. The interesting thing about the alarmones is that there is no molecular relationship between the signal molecule (the alarmone) and the process regulated by the signal, and this led Tomkins to call alarmones metabolic symbols. A metabolic symbol was here defined as ‘a specific intracellular effector molecule which accumulates when a cell is exposed to a particular environment’ (ibid). Tomkins emphasized that the alarmones are metabolically unstable substances, which means that they will quickly disappear once the situation improves, and he further observed that a domain can be designated encompassing all the metabolic processes controlled by the same symbol.

Tomkins also suggested an evolutionary mechanism to account for the creation of this regulatory system. The key here is the first enzyme in the pathway for glucose degradation, an enzyme named glucose kinase. The function of this enzyme is to catalyze the transfer of a phosphate-group (P) from the cellular energy-carrier ATP (shorthand for Adenosine-Tri-Phosphate) to the glucose molecule.

ATP + glucose → ADP + glucose-P

(where ADP is shorthand for Adenosine-Di-Phosphate). This reaction serves to activate the glucose molecule for further degradation. Now, the point is that the enzyme also catalyzes a minor side reaction whereby two phosphate groups (a so-called pyrophosphate = PP) are stripped off the ATP molecule to give cyclic AMP:

ATP → P-P + cAMP

In glucose starvation, when there is no glucose in the cell, the enzyme will run out of substrate for its main reaction, which means that the other substrate for this reaction, ATP, will tend to accumulate in the cell. This situation then causes the enzyme to start the minor side reaction, i.e. the degradation of ATP to cAMP, so that cAMP will start accumulating. Now, since cAMP and ATP are closely related compounds they tend to bind to the same regions of cellular proteins, and a high concentration of cAMP therefore implies that cAMP will displace ATP from its normal binding sites. Since ATP is the major energy-carrier molecule of the cell, this effectively blocks cellular energy consumption. Thus when the bacterium is low in its main energy source, glucose, this mechanism assures a much needed lowering of energy consumption.

Considered from above, what happens is that the concentration of cAMP has become a switch by which the cell can turn its energy consumption on and off. In the course of evolution, as the bacterial systems eventually learned to use cAMP, it became more and more detached from its original biochemical setting and took on a new role, namely as a release mechanism for specific transcription processes which provide mRNA strands for a series of enzymes needed for metabolic pathways involved in the degradation of non-glucose sugars.
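The relation between the graded (analogic) accumulation of cAMP and the threshold (digital) reading superimposed on it can be illustrated with a toy numerical sketch. It is not a biochemical model: the function names, the rate constants and the threshold value are hypothetical, chosen only for illustration, and none of them is taken from Tomkins.

```python
def camp_level(glucose, side_rate=1.0, decay=0.5, dt=0.1, steps=200):
    """Approximate the cAMP level a cell settles at for a given glucose level.

    Toy assumptions (not from the source):
    - the side reaction ATP -> PP + cAMP runs faster the less glucose
      there is to occupy the enzyme (graded, analogic response);
    - cAMP is metabolically unstable, so it decays at a rate
      proportional to its own concentration.
    """
    camp = 0.0
    for _ in range(steps):
        production = side_rate / (1.0 + glucose)  # falls smoothly as glucose rises
        camp += dt * (production - decay * camp)  # simple Euler step
    return camp


def enzymes_induced(camp, threshold=1.0):
    """Digital reading of the analogic signal: induction is either on or off."""
    return camp >= threshold


if __name__ == "__main__":
    for glucose in (0.0, 0.5, 4.0):
        camp = camp_level(glucose)
        print(f"glucose={glucose:4.1f}  cAMP={camp:5.2f}  induced={enzymes_induced(camp)}")
```

With these made-up parameters the cAMP level falls smoothly as glucose rises, whereas the induction flag flips from on to off somewhere between glucose levels 0.5 and 4.0. The threshold is not a property of the cAMP curve itself; it is a separate function applied to it, which is the sense in which the digital code is superimposed on the analogic one.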

As pointed out by the Danish biochemist Mogens Kilstrup, cAMP in this case is at once an icon (as a specific molecular conformation), an index (for ATP), and a symbol for glucose starvation (Kilstrup, personal communication).

The transformation of analogic codings to digital codings through the evolutionary formation of new contextual settings is probably involved in many or all cases of biological emergence. Another interesting example of this kind of transformation is so-called quorum sensing whereby bacterial systems are capable of measuring their own density via the concentration of a signal molecule emitted by all the cells. The semiotics of this system was discussed by Luis Bruni (Bruni, 2002).

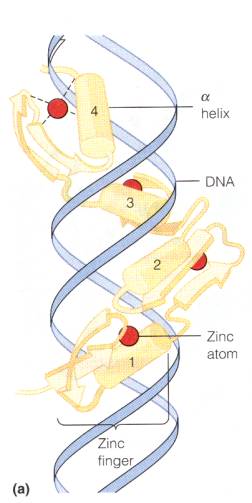

Digitization of analogic codings does not, of course, imply an elimination of the original analogic coding. Thus the digitality of the genetic sign systems presupposes a multitude of analogic codings whereby protein surfaces are fitted to bind to distinct spatial areas of the DNA helix, i.e. the protein conformations exhibit indexical relations to particular helical DNA sequences, as exemplified in Figure 3, which shows the steric arrangement of the so-called ‘zinc finger motif’ for binding of transcription factors to DNA. Thus, while DNA for a geneticist is primarily a sequential molecule, a biochemist will perhaps more often think of DNA as a spatially continuous structure.

Figure 3: Binding of a protein (a transcription factor) to DNA. So-called zinc fingers fit into the major groove of the DNA helix and make contact with and identify DNA sequences (adapted from Mathews, van Holde et al. 1999).

The punctuation, which is the core of digitality, is not inherent to the molecule or biological process itself (e.g. the sound of speech) but is always conferred upon it by evolution preparing a contextual setting. That a certain threshold level of cAMP in a given bacterial cell causes the induction of a series of sugar-degrading enzymes, which were not produced until this particular threshold concentration was reached, can only be explained by an evolutionary process whereby one kind of reference (cAMP as an icon for ATP, which leads enzymes to bind cAMP instead of ATP) becomes transformed to another kind of reference (cAMP as a symbol for glucose starvation). It seems likely that this process is not radically different from the process whereby a stylized drawing of an owl could enter the hieroglyphic alphabet in ancient Egypt and thereby attain the symbolic meaning ‘m.’ In both cases we have a transformation from index to symbol, and it is indeed hard to see how a symbol could derive from anything less than indices (cf. Terrence Deacon's discussion of the origin of symbolic language in human evolution in Deacon, 1997).

It was one of Gregory Bateson's greatest contributions to show that human or animal communication systems are deeply dependent on paralinguistic or paralogical settings. The concept of code-duality was meant as a tool for conceptualizing this theme as a general theme for evolution. The perpetual accumulation of new mutations in the genomes of the species of this world is of course necessary for the evolutionary process to proceed, but code-duality points to the necessity for a semiotic contextualizing of the process. Following Pasteur's famous saying that ‘only the well prepared will profit from good fortune’ I should like to suggest that evolution is not at all a result of blind mutations, but is caused rather by those semiotic integrations which allowed biosystems to profit from the eventual appearance of ‘lucky’ mutations.

REFERENCES

Bateson, G. 1979. Mind and Nature. A Necessary Unity. New York: Bantam Books.

Bruni, L. E. 2002. Does “Quorum Sensing” Imply a New Type of Biological Information? Sign Systems Studies. 30(1): 221-243.

Buss, L. 1987. The Evolution of Individuality. Princeton: Princeton Univ. Press.

Dawkins, R. 1989 (1976). The Selfish Gene. 2nd ed. Oxford: Oxford University Press.

Deacon, T. 1997. The Symbolic Species. New York: Norton.

Depew, D. L. and B. H. Weber. 1995. Darwinism Evolving: Systems Dynamics and the Genealogy of Natural Selection. Cambridge, MA: Bradford/The MIT Press.

Hoffmeyer, J. 2002. The Central Dogma: A Joke that became Real. Semiotica. 138(1): 1-13.

—.2001. S/E ≥ 1. A Semiotic Understanding of Bioengineering. Sign Systems Studies. 29(1): 277-291.

—.1996. Signs of Meaning in the Universe. Advances in Semiotics. Bloomington, IN: Indiana University Press.

—.1987. The Constraints of Nature on Free Will. in V. Mortensen and R. C. Sorensen eds. Free Will and Determinism. pp. 188-200. Aarhus: Aarhus University Press.

—.1985. I Legemets Ånd, Information, København. 16. October 1985

—.1984. Naturen i hovedet. Om biologisk videnskab. København: Rosinante.

—.1982. Samfundets naturhistorie. København: Rosinante.

Hoffmeyer, J. and C. Emmeche. 1991. Code-Duality and the Semiotics of Nature. in M. Anderson and F. Merrell eds. On Semiotic Modeling. pp.117-166. New York: Mouton de Gruyter.

Hull, D. 1981. Units of Evolution: A Metaphysical Essay. in U. J. Jensen and R. Harré eds. The Philosophy of Evolution. pp. 23-44. Brighton: Harvester Press.

Lewontin, R. C. 1992. The Dream of the Human Genome. The New York Review of Books. 31-40.

Mathews, C., K. E. van Holde and K. G. Ahern. 1999. Biochemistry, Third Edition. San Francisco: Addison Wesley.

Pattee, H. H. 1997. The Physics of Symbols and The Evolution of Semiotic Controls. in Santa Fe Institute Studies in the Sciences of Complexity, Proceedings Volume. Redwood City, CA: Addison-Wesley. Available Online. http://www.ssie.binghamton.edu/pattee/semiotic.html

—.1977. “Dynamic and Linguistic Modes of Complex Systems.” International Journal for General Systems. 3: 259-266.

—.1972. Laws and Constraints, Symbols, and Languages. in C. H. Waddington ed. Towards a Theoretical Biology. pp. 248-258. Edinburgh: University of Edinburgh Press.

Peirce, C. S. 1931-35. Collected Papers I-VI. C. Hartshorne and P. Weiss eds. Cambridge, Mass.: Harvard University Press.

Polanyi, M. 1958. Personal Knowledge. London: Routledge.

Saussure, F. d. 1916. Cours de linguistique générale. Paris: Payot.

Sterelny, K. and P. E. Griffiths. 1999. Sex and Death. An Introduction to Philosophy of Biology. Chicago: University of Chicago Press.

Thibault, P. J. 1998. Code. in P. Bouissac ed. Encyclopedia of Semiotics. pp.125-129. Oxford: Oxford University Press.

Tomkins, G. M. 1975. The Metabolic Code. Science. 189: 760-763.

von Neumann, J. 1955. The Mathematical Foundations of Quantum Mechanics. Princeton, NJ: Princeton University Press.